Formalizing the Principle of Factiality

Part I: The Great Outdoors

The historical trajectory of modern philosophy is defined by a progressive retreat inward, a withdrawal triggered by a single logical failure. When David Hume formulated the problem of induction, he severed the necessary link between cause and effect, arguing that human reason cannot guarantee the future will resemble the past. By insisting that all knowledge stems exclusively from sensory experience, he concluded that the mind has no innate access to the hidden mechanisms of the external world. Any assumed connection between events is not a law of nature, but merely a psychological habit. This empirical rigor dismantled the rationalist assumption that human thought could transparently read the universe, effectively trapping the subject inside its own perceptions and permanently isolating it from the "Great Outdoors."

Hume dismissed traditional metaphysics as an "airy science" chasing ghosts. Because the true powers of nature remain entirely opaque to us, he confined his work to a "mental geography" of our own faculties (Hume 1748, 9). This structural ignorance creates a vulnerability in how we predict the future, striking at the very heart of inductive reasoning. Hume diagnosed a fatal logical flaw: without prior experience, the mind cannot deduce an effect from any given cause. Any assumed connection made a priori is entirely arbitrary (Hume 1748, 21–22). When we use past events to predict future outcomes, we rely on a viciously circular assumption—that the future will simply resemble the past. The argument depends solely on the very resemblance it is trying to establish (Hume 1748, 27). Hume concluded that our predictions are driven by mechanical custom, operating in a total absence of rational deduction (Hume 1748, 31–32).

Rescuing Newtonian physics from this skeptical void prompted a radical philosophical intervention. Awakened from his "dogmatic slumber" by Hume's empiricism, Immanuel Kant faced a terrifying prospect. A universe where causal laws are nothing more than psychological habits threatened to dissolve all scientific certainty. Seeking to bridge a paralyzing divide, he intervened in the debate between rationalists who believed reason could map the universe, and empiricists who trapped knowledge within immediate sensation.

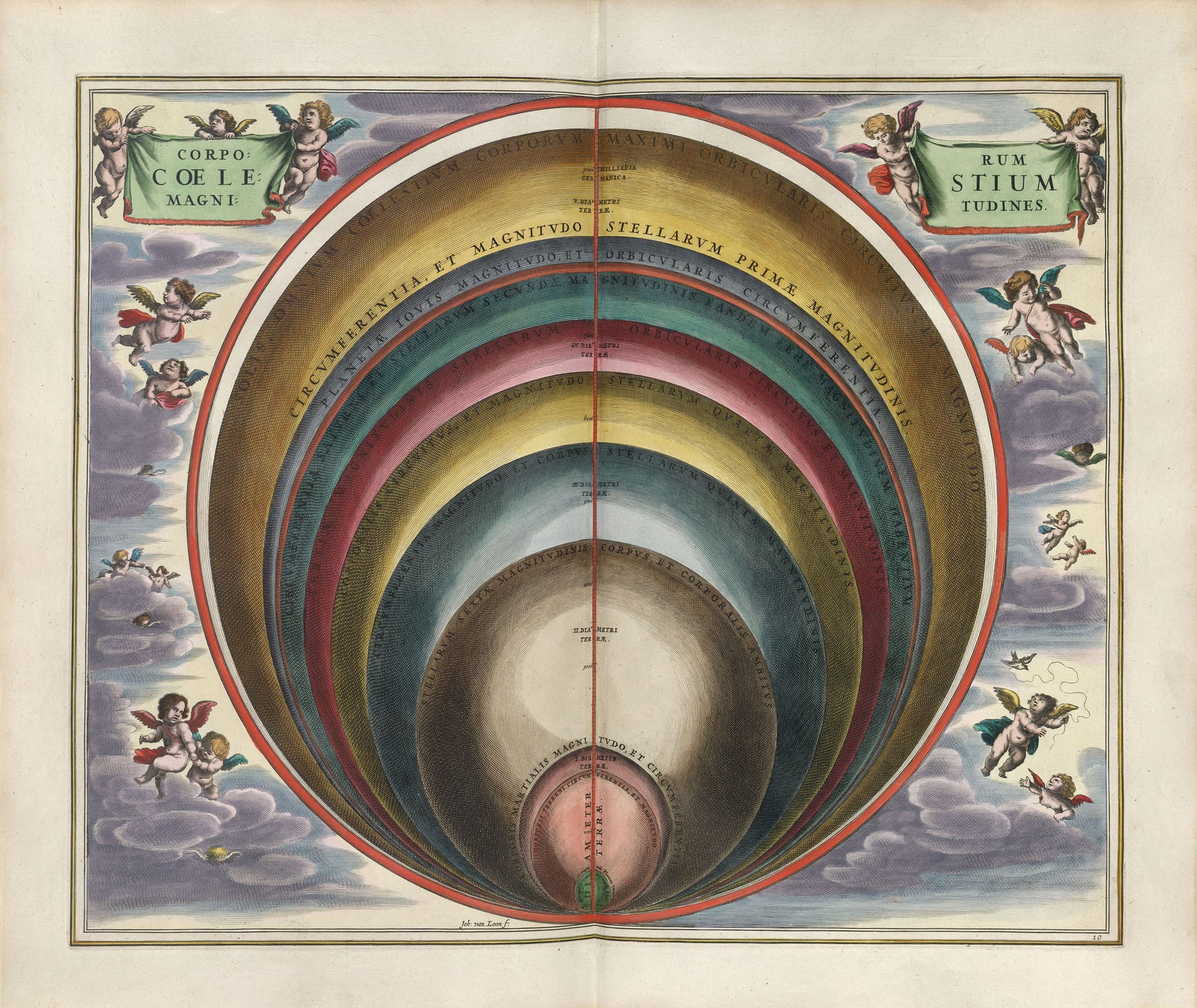

To survive Hume's earthquake, Kant built a cognitive fortress. While the Kantian Copernican Revolution is often celebrated as an awakening of human thought, this narrative obscures a heavy philosophical bargain. To save the necessity of physical laws, Kant traded away our access to the absolute, engineering a massive epistemological quarantine. He dictated that objects conform to the structure of the human mind, effectively building the world around the subject (Kant 1787, Bxvi). Kant constructed this "locked room" by arguing that raw sensory data—the "manifold of intuition"—necessitates active synthesis by our understanding to become a known object (Kant 1787, B137–138). To protect this space, he introduced the noumenon as a deliberate firewall. It acted as a boundary concept (Grenzbegriff) to prevent human reason from ever attempting to grasp "things-in-themselves" (Kant 1787, A255/B311).

Ultimately, this defensive architecture became an inescapable prison. Thought and being fused into a closed-circuit loop. By reducing the concept of the "world-whole" to an endless regression of cognitive synthesis (Kant 1787, A426/B454), Kant effectively outlawed the "Great Outdoors." His framework systematically absorbed the external universe, turning it into a projection of human cognitive categories. Following Kant's transcendental deduction, modern thought fell captive to correlationism.

Elevating our localized cognitive deficit into a hard, mathematical limitation opens a pathway out of this enclosure. We can turn formal logic against inductive reasoning to expose a definitive, algorithmic blind spot. Reza Negarestani pursues this line of inquiry by applying Gödel’s incompleteness theorems to the problem of learning. He demonstrates that induction can never be completely formalized into a perfect system. Any computational agent attempting to learn from experience will eventually crash into a logical paradox, remaining structurally susceptible to Gödel's limits (Negarestani 2018, 531–534). Such an agent is permanently paralyzed. It remains unable to determine the absolute truth of its own operations from inside its limited perspective. Under this light, the failure of induction transcends mere psychological habit and becomes a strict mathematical law.

Part II: The Speculative Breakout

The Arche-Fossil and Rigid Designation

Escaping the paralysis of the Kantian and Humean enclosure demands a decisive theoretical rupture. The cognitive fortress built to save science from empiricism ultimately trapped thought inside a closed loop, severing our connection to the external world. Quentin Meillassoux supplies the intervention necessary to shatter this paradigm. As a primary catalyst for Speculative Realism, he challenged the exhaustion of postmodern traditions by giving the enemy a definitive name: "correlationism." Diagnosing this post-Kantian pathology as a trap, he recognized that it locked all modern thought inside an inflexible subject-object relation. Inheriting a deep commitment to mathematics from his teacher Alain Badiou, Meillassoux refused to counter Kant with mere poetic speculation. Instead, he recast the deficit of induction as a strict mathematical problem. The resulting methodological shift shatters the closed "dice-totality" of possibilities on which the defense of necessity depends. It bridges the gap between continental philosophy and the formal study of computational limits, while inadvertently setting the stage for the collapse of his own negative architecture.

The overarching project of After Finitude seeks to restore the absolute capacity of the physical sciences. Meillassoux aims to validate their ability to speak truthfully about a material world entirely devoid of human observers. To achieve this, he reverse-engineers the Kantian lock. Deep inside the correlationist paradigm, he unearths an unassimilable anomaly: the "Arche-Fossil."

Nathan Brown offers a critical vantage point for unpacking the specific architecture of this speculative realism. Working across modern philosophy and critical theory, he articulates a theory of "rationalist empiricism." Brown demonstrates how Meillassoux synthesizes Cartesian mathematics with Hume's problem of induction to forge a new dialectical materialism (Brown 2021, 50–51). Viewed through this structural lens, the problem of ancestrality functions primarily as a heuristic device. It sharply demarcates materialism from idealism, preparing the philosophical ground to validate the principle of factiality (Brown 2021, 60–61).

Scientific statements about ancestral time—such as the accretion of the earth or the light from distant stars—describe a material reality that existed long before human givenness. We can formalize an ancestral statement $A$ as occurring at a time $t_A$, where $t_A$ strictly precedes the emergence of the human subject $t_S$ ($t_A < t_S$). The correlationist architecture is unequipped to process this raw data. It attempts to "correct" these scientific statements by silently appending a discrete "codicil of modernity" (e.g., "Event Y occurred X billion years ago... for humans"). Formally, the correlationist insists that any statement $A$ is necessarily bound to a subject $S$, transforming the objective $A$ into the relation $(A \leftrightarrow S)$. Meillassoux uses the Arche-Fossil to expose this semantic doubling, showing that it effectively cancels the literal, ancestral truth of science (Meillassoux 2008a, 13–17). If $A$ only exists as $(A \leftrightarrow S)$, then $A$ could not have existed at $t_A$ prior to $S$.

However, scholars of German Idealism actively contest this speculative dismissal, mounting a rigorous contemporary resistance to Meillassoux’s narrative. Toby Lovat enters this debate from the standpoint of Kantian epistemology, defending the orthodox position against these new materialist claims. He argues that ancestral scientific propositions do not actually breach the correlationist prison. From an orthodox Kantian perspective, past events, however remote, are understood strictly through the conditions of our knowing. Grasping an ancestral event entails applying the conditions of possible experience through a logical, temporal retrojection. This temporal maneuver acts as a direct corrective to the chronological assumptions behind the ancestral objection (Lovat 2018, 28–40). On Lovat's reading, the correlationist architecture is in fact well equipped to process the Arche-Fossil.

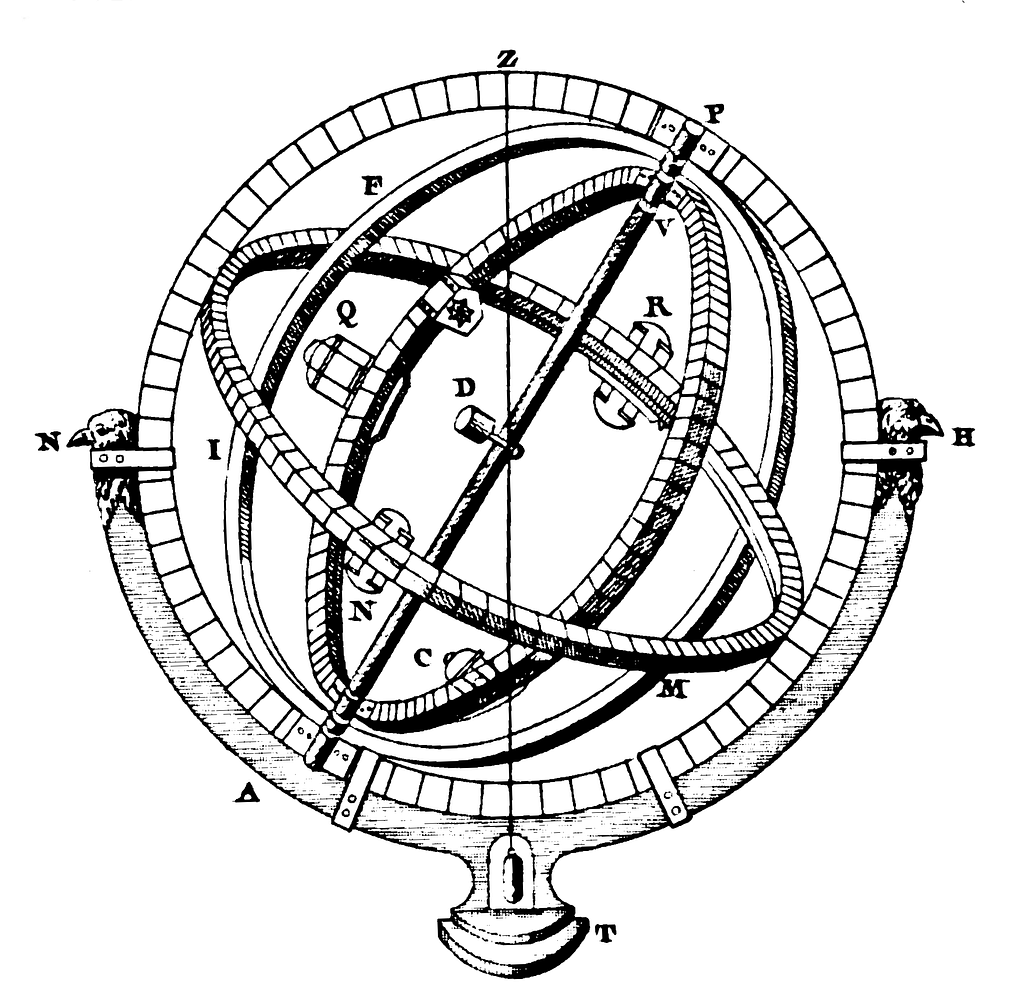

While Meillassoux exposed the correlationist flaw, continental philosophy lacks the formal precision to secure the breach independently. Saul Kripke's logical apparatus supplies the exact tool necessary to formalize this breakout and defeat the Kantian retrojection. By revolutionizing formal logic, Kripke established the foundational semantics for modal logic, definitively mapping the mathematical structures of necessity and possibility. Dismantling prevailing mid-twentieth-century orthodoxies, he showed that names act as rigid designators, locking onto their reference across all counterfactual scenarios regardless of shifting descriptive properties.

Kripke's theory of rigid designation serves as a tracking mechanism that operates completely independent of human observation or subjective concepts (Kripke 1980, 48–49). This metaphysical pivot crossed a critical gap. He successfully severed the traditional assumption that "absolute necessity" could only be known through pure logic (a priori). Instead, Kripke demonstrated that absolute metaphysical truths could actually be discovered through direct empirical investigation. Meillassoux tactically appropriates this system to synthesize his breakout. Utilizing Kripke's discovery of the necessary a posteriori, he validates the mind-independent reality of ancestral scientific statements (Kripke 1980, 159). This analytic wedge demonstrates that the material reality of the Arche-Fossil remains indifferent to human observation. We discover the metaphysical necessity of absolute contingency through a careful, scientific analysis of correlationism’s own structural failure. This method avoids deducing the Absolute from a locked room of pure logic.

The Transfinite Limit

The foundational substrate of reality is a state of Hyper-Chaos, stripped of all metaphysical guarantees. We distinguish this Hyper-Chaos from standard randomness or Heraclitean flux. Meillassoux critiques Heraclitean becoming as a form of metaphysical "fixism" because it mandates that becoming has to persist eternally (Meillassoux 2008b, 10). Hyper-Chaos, by contrast, is so radical it can destroy becoming itself. It produces arbitrary moments of absolute, static fixity without reason (Meillassoux 2008b, 10–11). Furthermore, a hard boundary isolates the fragility of physical laws from the ironclad nature of logical axioms: the laws of physics could change at any moment, but the fundamental laws of logic cannot.

An exact formal instrument is necessary to protect this Absolute Contingency from probabilistic counter-arguments. The traditional correlationist defense assumes that if the universe were truly chaotic, the persistence of physical laws would be a statistical impossibility. This defense leans entirely on modeling possibility as a closed system, categorizing the universe as a totalized roll of the dice.

As previously established, Kant utilized this conceptual boundary in his Antinomies. He argued that we cannot know if the world is finite or infinite because the "world-whole" acts as a regressive synthesis playing out in our minds (Kant 1787, A426/B454). Negarestani isolates this logical failure. He argues that Kantian understanding inevitably generates these antinomic contradictions when attempting to grasp the Absolute because it stubbornly refuses the identity of opposites. As a result, it mischaracterizes the Absolute as a successive series of past temporal states, producing an indefinite and paralyzing regress (Negarestani 2018, 234–235).

Cantorian set theory dissolves the closed universe, breaking the correlationist boundary. The mechanics of this dissolution preserve the ontological scope of Hume's question concerning the uniformity of nature. Continuing his analysis, Brown (2021, 51–53) details how this empiricist problem is grafted onto transfinite mathematics. This methodological choice defends the coherence of absolutely contingent physical laws against probabilistic assumptions.

Cantor demonstrated that continuous infinities generate an inconsistent multiplicity. This mathematical infinitization of infinity shows the definitive absence of a cosmic wholeness or grand unifying One (Johnston 2013, 34–35). As Alain Badiou shows, the mathematical postulation of a "set of all sets" is intrinsically contradictory. The contradiction offers the exact means to establish the metaphysical inexistence of a totalized being or "Whole" (Badiou 2009, 107). The specific axiomatic machinery of Zermelo-Fraenkel set theory with the Axiom of Choice (ZFC) reinforces this insight. Burhanuddin Baki clarifies that the Axiom of Foundation prohibits a set from belonging to itself in ZFC (Baki 2014, 65–68). The axiom effectively outlaws the "count-as-one" of the universe, mathematically proving that the absolute cannot be gathered into a totalized Whole.
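The contradiction in a "set of all sets" can be traced to Cantor's theorem: no map from a set to its power set reaches every subset, because the "diagonal" subset is always missed. The following is a minimal Python sketch of that diagonal argument over a small finite set, offered as an illustration rather than as anything drawn from the cited sources. The function names `diagonal_set` and `no_map_hits_its_diagonal` are hypothetical, and the exhaustive check is only feasible for tiny domains.

```python
from itertools import product


def diagonal_set(domain, f):
    """Return D = {x in domain : x not in f(x)}, Cantor's diagonal subset."""
    return frozenset(x for x in domain if x not in f[x])


def no_map_hits_its_diagonal(domain):
    """Exhaustively check every map f: domain -> P(domain).

    Returns True if, for each such map, the diagonal subset is absent
    from the image of f (so f is never onto the power set).
    """
    elems = sorted(domain)
    # Enumerate the power set of the domain as frozensets.
    subsets = [
        frozenset(e for e, keep in zip(elems, mask) if keep)
        for mask in product([False, True], repeat=len(elems))
    ]
    # Enumerate every assignment of a subset to each element.
    for images in product(subsets, repeat=len(elems)):
        f = dict(zip(elems, images))
        if diagonal_set(elems, f) in f.values():
            return False
    return True


# One hand-picked map, then the exhaustive check over a three-element set.
example = {0: frozenset(), 1: frozenset({0, 1}), 2: frozenset({2})}
print(diagonal_set([0, 1, 2], example))
print(no_map_hits_its_diagonal({0, 1, 2}))
```

If a universal set existed, its power set would have to be contained in it, which the same argument rules out. The finite check only illustrates the mechanism; the general result rests on the proof, not on enumeration.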

Because the concept of a Universe is inconsistent, there can be no uniform procedure to totalize or frame reality. Multiples simply enter into the composition of other multiples; the plural can never fold back upon a singular, overarching entity (Badiou 2009, 67–68). This structural consequence prohibits any statistical totalization of possible worlds. Without a definitive container of "All" possibilities, the entire apparatus of probability collapses. The collapse supplies the mathematical method for distinguishing true contingency from chance, explaining why phenomenal stability is possible even when devoid of underlying necessity (Meillassoux 2008a, 103–105).

Part III: The Structural Deficit

Hasty Ontologization

With correlationism breached, the initial flaw in the speculative counter-attack becomes exposed. A broader tendency exists in speculative realism to distort Kantian limits, and Meillassoux’s central critique leans heavily on this misreading. Deploying Toby Lovat's primary diagnostic observation reveals the error driving the project. Lovat emphasizes that Meillassoux confuses epistemology with ontology. Essentially, Meillassoux interprets a restriction on how much humans can know as a positive claim about what reality actually is (Lovat 2018, 3). This confusion anchors the subsequent critique. A localized cognitive deficit is inappropriately forced into an irrevocable law governing the universe.

A critical counterweight to this maneuver emerges from the messy, biologically conflicted material reality of the human subject. Connecting German Idealism, psychoanalysis, and contemporary continental thought, Adrian Johnston constructs a "transcendental materialism" that seeks to reconcile rigorous materialism with the irreducible gap of human subjectivity. He serves as a vital corrective to the mathematical ontologies championed by both Meillassoux and Alain Badiou. Expressly warning against an exclusive reliance on mathematical formalism, Johnston contends that an authentic materialism has to engage deeply with the empirical sciences, prioritizing biology and the life sciences (Johnston 2013, xii–xiii). Grounding the speculative breakout firmly in the tangible realities of the human subject shifts the narrative focus away from Meillassoux's sterile mathematical absolute.

From this grounded perspective, Johnston identifies Meillassoux's maneuver as a "hasty ontologization" of the problem of induction (Johnston 2013, 10). He claims Meillassoux transforms a privation of human knowledge into a positive ontological solution. Hyper-Chaos functions as an unreasonable rationalism, entirely divorced from the actual practices of modern science (Johnston 2013, 169–170). Categorizing being as hyper-chaotic creates an empty philosophical fortress, insulated from scientific contestation or empirical verification (Johnston 2013, 171–172). A dialectical materialism willing to take risks by engaging with ontic, empirical disciplines is therefore necessary.

This assessment, however, is flawed. Claiming that the ontological argument for the necessity of contingency derives from the Cantorian intervention against probability is incorrect. Returning to Nathan Brown's paradigm clarifies the logical dependencies in Meillassoux's system to correct this error. Brown demonstrates that the absolute necessity of blocking the probabilistic deflation of Hume's problem derives from the principle of factiality itself (Brown 2021, 52–53).

Meillassoux introduces the Principle of Factiality to establish that the only absolute necessity governing the universe is the sheer absence of necessity. We can formalize this core tenet as an assertion that any physical law or proposition $P$ is not necessary ($\neg\Box P$), and that its contingency is absolutely necessary ($\Box\Diamond P \land \Box\Diamond\neg P$). By reversing the logical priority, he avoids hastily ontologizing an epistemological gap. The laws of physics are radically and permanently contingent.
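To make the modal formulas above concrete, here is a small Python sketch that evaluates them on a toy Kripke model. It is an illustration under stated assumptions, not a reconstruction of Meillassoux's own argument: the frame is assumed to be S5-style (every world accessible from every world), propositions are modelled as the set of worlds where they hold, and the names `box`, `diamond`, and `factiality_holds` are hypothetical.

```python
def box(worlds, access, prop):
    """Worlds at which prop holds in every accessible world."""
    return {w for w in worlds if access[w] <= prop}


def diamond(worlds, access, prop):
    """Worlds at which prop holds in at least one accessible world."""
    return {w for w in worlds if access[w] & prop}


def factiality_holds(worlds, access, prop):
    """True if, at every world, prop is not necessary and both
    'possibly prop' and 'possibly not prop' are themselves necessary."""
    not_prop = worlds - prop
    not_necessary = worlds - box(worlds, access, prop)
    necessarily_contingent = (
        box(worlds, access, diamond(worlds, access, prop))
        & box(worlds, access, diamond(worlds, access, not_prop))
    )
    return (not_necessary & necessarily_contingent) == worlds


worlds = {"w1", "w2", "w3"}
# Assumption: universal accessibility, the simplest S5 frame.
access = {w: set(worlds) for w in worlds}

contingent_law = {"w1", "w2"}  # hypothetical law holding in two of three worlds
necessary_law = set(worlds)    # hypothetical law holding in every world

print(factiality_holds(worlds, access, contingent_law))  # True
print(factiality_holds(worlds, access, necessary_law))   # False
```

On this toy frame the formalization is satisfied only by laws that hold in some worlds and fail in others; a law true everywhere violates $\neg\Box P$. The choice of S5 matters: on weaker frames the two conjuncts can come apart from world to world.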

Crucially, this absolute contingency does not constitute a Humean reductionism concerning physical laws. Dual fluency in ontological theory and the raw mechanics of physics is necessary to stress-test Meillassoux's hyper-chaotic universe. Linking the hard sciences and continental philosophy, Michael J. Ardoline leverages a foundational background in physics to expose the hidden architectural commitments in Meillassoux's system.

Ardoline argues that Meillassoux's defense against probabilistic objections inadvertently commits him to a universe where physical laws are real, existing independently of the objects they govern. While their necessary status is critiqued, their independent existence is preserved. Ardoline deploys this observation of a "two worlds theory" as a critical wedge (Ardoline 2018, 237–238). It successfully separates the deep contingency of laws from the chance-bound entities they govern, showing that the universe avoids being flattened into pure data. A rigid metaphysical hierarchy has been maintained, one that is ultimately mathematically bankrupt.

Frequentialist Short-Circuit

A critical vulnerability exists in the system's primary weapon. The destruction of the closed "dice-totality" via transfinite mathematics is widely celebrated as a successful maneuver, yet this celebration masks a profound category error. The leap from Cantorian set theory to the total collapse of probability acts as a logical short-circuit, an unproven decree (Livingston 2013, 105). Meillassoux assumes that an untotalizable hierarchy automatically absorbs and destroys all probabilistic reasoning regarding physical laws. However, the sheer existence of uncountably infinite "possible worlds" does not inherently prohibit probability measures from being consistently well-defined over those domains. The uniform (Lebesgue) measure on the interval $[0,1]$, itself an uncountable set, is the textbook case. The transfinite does not dissolve the apparatus of chance; it expands its ledger.

Furthermore, the entire attack is directed at a straw man. Meillassoux assumes that Kant’s defense of causal necessity relies on a "frequentialist implication," believing Kant assumes that if the laws of nature were truly contingent, they would change frequently. In reality, Kant never relies on probabilistic reasoning or the assumption of a totalizable set of physical possibilities. Kant’s argument for causal necessity is grounded in the normative, a priori conditions of apperception (Lovat 2018, 231–233). Therefore, deploying Cantorian set theory to destroy a probabilistic "dice-totality" is an attack misdirected against Kantian correlationism.

Kripke's account of possible worlds exposes this short-circuit. Because Kripke built the foundational semantics for modal logic, his treatment of possible worlds as stipulated abstractions carries considerable weight. He explicitly argues against discovering possible worlds as fully formed, totalized sets or "foreign countries" (Kripke 1980, 44). Rather, they represent abstract states defined exclusively by the specific conditions assigned to them (Kripke 1980, 16–20).

This architectural ruling decisively undermines Meillassoux’s deployment of Cantorian totalities. Probability mechanics only necessitate a localized "sample space" of these stipulated mini-worlds, rendering a mathematically totalized "Universe-set" unnecessary. Consequently, Meillassoux's transfinite rejection of probability fails; the apparatus of chance survives the Cantorian infinite intact.

Meillassoux attempts to defend his system by deploying Cantor’s theorem. He argues that the "point of excess" introduces an unquantifiable disruption even to localized stable probability measures (Baki 2014, 85–89). The defense, however, relies solely on a negative mathematical abstraction. It ignores the computational friction needed to actually traverse these states.

Negative Architecture

The true architectural failure of the speculative realist project lies in the load-bearing walls of its absolute. The domain of Hyper-Chaos is frequently misunderstood as an unstructured abyss. In reality, it is rigidly governed by the law of non-contradiction. This constraint is grounded in what Kripke identifies as an "internal" relation.

Kripke asserts that identity is fundamentally internal, rendering the principle of non-contradiction as brutally self-evident and unbreakable as Leibniz’s Law (Kripke 1980, 3–4). This internal rigor provides the true load-bearing wall for the Absolute. Hyper-Chaos behaves as a highly structured manifold rather than a lawless void; within it, contradiction acts as a critical system error.

Achille C. Varzi's precise critical apparatus helps diagnose whether this internal rigor represents a genuine feature of the universe, or simply a human linguistic bias. His foundational work investigates how we draw conceptual and physical boundaries to organize our world. Crucially, Varzi distinguishes artificial, human-made boundaries—which he calls "fiat conventions"—from natural, mind-independent entities.

He conceptualizes pure logic as an exercise conducted in a dark, windowless "locked room." Inside this isolated space, logicians evaluate statements blindly. They rely exclusively on linguistic competence, remaining cut off from the outside world (Varzi 2014, 53). This spatial metaphor counters the speculative realist narrative. Meillassoux believes he has finally shattered the correlationist cage to step into the "Great Outdoors." Applying Varzi’s framework reveals a devastating irony: Meillassoux simply dragged the walls of the locked room out into the void with him, assuming human logic universally governs physical reality.

Varzi's technical parameters draw an inflexible boundary partitioning two distinct rules. The first is the semantic principle of contravalence, which dictates that no statement is simultaneously true and false. The second is the ontological law of non-contradiction, which mandates that no physical entity can simultaneously embody $P$ and $\neg P$ (Varzi 2014, 59).

Moving from a semantic rule about human syntax to a hard ontological rule about the universe constitutes a massive, unearned leap. Varzi identifies this specific error as the "fallacy of verbalism"—the severe mistake of confusing facts about words with facts about worlds (Varzi 2014, 58). By elevating the Law of Non-Contradiction to an absolute constraint on reality, Meillassoux commits this fallacy. He enforces a semantic convention as an inflexible ontological claim concerning the physical impossibility of contradictory entities in Hyper-Chaos (Varzi 2014, 64). A massive bias is smuggled into the heart of his absolute, demonstrating the void is heavily pre-formatted by human syntax.

Assume a contradictory entity were to materialize in this space. This object would concurrently embody its own nature and its own alteration, occupying all possible states at once. A structure saturated with all its own potential variations is incapable of further modification. Meillassoux identifies such an entity as a "black hole of differences." Since it is already everything it is not, it possesses no remaining capacity to be otherwise (Meillassoux 2008a, 69–70).

Absolute saturation calcifies the entity. It renders it an utterly immutable instance, elevating it to the status of a necessary being. However, the Principle of Factiality establishes that absolute contingency is the sole permissible necessity (Meillassoux 2008a, 50–60). A necessary, immutable entity violates the core operating protocol of this ontology. Thus, contradiction is irrevocably foreclosed.

Christopher Watkin describes this structural exemption as a "split rationality" (Watkin 2013, 5–6). An architecture is constructed where all laws are radically contingent and susceptible to instantaneous collapse. Meanwhile, Meillassoux insulates the logical laws governing his own cognitive procedure, namely the principle of non-contradiction, from this very contingency. One cannot bootstrap rationality out of pure contingency. Exempting human epistemological norms from the rule of Hyper-Chaos, and elevating them to eternal absolutes, results in a "fideistic idolatry" of current rationality (Watkin 2013, 5–6). Meillassoux's absolute is thus secretly tethered to the very human cognitive apparatus it claims to transcend.

The theoretical deficit emerges at the boundary of this proof. The reigning logic of this new materialism remains completely negative. By demonstrating that non-contradiction is an absolute constraint, the proof reveals Hyper-Chaos to be a highly organized logical manifold rather than a formless void. It restricts what can exist without generating the coordinates for what actually does exist. The system is armed with a method for deletion, but lacks an apparatus for creation.

The paradigm of factualism can expose this smuggled bias with precision. Kit Fine defines realism for a given domain as the position that its propositions represent how things are in "Reality" itself, possessing a fundamental, irreducibly factual status (Fine 2001, 1–3). By insisting that non-contradiction functions as an ontological necessity rather than a semantic convention, Meillassoux inadvertently makes a substantive factualist claim about the core structure of the absolute. He treats logical consistency as a fundamental, irreducible fact of the world. Utilizing Fine’s vocabulary clarifies the error: instead of achieving a neutral, unstructured void, Meillassoux constructs a realist metaphysical foundation heavily populated by rigid logical facts.

Contradiction

The architecture relies on the paranoid assumption that abandoning the Law of Non-Contradiction as a physical absolute would trigger a logical explosion, reducing the universe to trivial meaninglessness. The prevailing alternative to classical logic is dialetheism, championed by theorists like Graham Priest. Dialetheism is the doctrine that there are true contradictions in reality (Zalta 2004, 1). Dialetheists argue that the absolute limits of thought and infinite sets naturally produce contradictory objects (Zalta 2004, 6). This presents a philosophical threat requiring a robust defense of classical logic.
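For reference, the "logical explosion" at stake is the classical derivation of an arbitrary proposition $Q$ from a single contradiction. The sketch below uses standard textbook rule names and is not drawn from the cited sources; $P$ and $Q$ stand for any propositions.

$$
\begin{aligned}
1.\;& P \land \neg P && \text{assumption} \\
2.\;& P && \text{from 1, conjunction elimination} \\
3.\;& P \lor Q && \text{from 2, disjunction introduction} \\
4.\;& \neg P && \text{from 1, conjunction elimination} \\
5.\;& Q && \text{from 3 and 4, disjunctive syllogism}
\end{aligned}
$$

Paraconsistent logics of the kind dialetheists adopt typically block this derivation at step 5 by rejecting disjunctive syllogism, which is why accepting some true contradictions need not entail accepting everything.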

A critical algorithmic interception mounts a counter-offensive. Connecting analytic metaphysics and computer science, Edward N. Zalta offers this defense by formalizing the ontology of abstract objects. He demonstrates that classical logic, when extended by the logic of encoding, possesses the analytical tools to resolve apparent paradoxes. This system avoids requiring us to accept that the physical world harbors true contradictions (Zalta 2004, 1).

Zalta achieves this resolution through a strict bifurcation of predication. He details how ordinary, concrete objects exemplify properties, whereas abstract objects encode them. Applying this distinction to the thermodynamic argument clarifies the modal bridge. When an object physically exemplifies a property, it entails actual physical execution and generates computational friction. As a result, it remains strictly bound by the Law of Non-Contradiction. A physical object cannot exemplify contradictory states without triggering systemic collapse (Zalta 2004, 19).

Conversely, an abstract object residing in the S5 void can safely encode contradictory or paradoxical properties, such as Meillassoux's Hyper-Chaos or the Cantorian set of all sets. This encoded status requires no thermodynamic expenditure and bypasses the physical necessity of actual exemplification (Zalta 2004, 16). This distinction ties Zalta's abstract objects to the definition of the S5 global void. The void never needs to abandon the Law of Non-Contradiction; it operates entirely within Zalta's domain of encoded properties. This structural quarantine keeps the thermodynamic space of exemplified properties, which represents System S4, safe from logical explosion. The algorithmic realist manages to account for the paradoxes of the infinite limit without triggering physical destruction.

Algorithmic Void

To move beyond purely negative logic and map the thermodynamic reality of computation, algorithmic information theory serves as the necessary corrective. The advent of the computer provoked a fundamental philosophical paradigm shift, radically altering the epistemology of what it means to truly "understand" a system (Chaitin 2005, 2). Mathematician Gregory Chaitin developed the operational protocol required to navigate this digital philosophy. He systematically dismantled David Hilbert's vision of a static, perfectly mechanical, and completely rigorous mathematical universe. He showed that true randomness exists in pure mathematics, establishing that mathematical facts can exist as atomic, independent truths needing no structural reason or simpler axiomatic derivation.

The ultimate nature of Meillassoux's structural deficit perfectly mirrors a fatal trap identified by Chaitin concerning the undefinability of randomness. Chaitin demonstrated that if one successfully defines a precise metric for randomness, that very definition instantly becomes a distinguishing feature. It immediately renders any number satisfying the metric highly atypical and therefore non-random (Chaitin 2005, 64–66). The philosophical attempt to perfectly define and capture Absolute Contingency falls into the same trap. By defining the void exclusively through the absolute necessity of non-contradiction, Meillassoux inevitably restricts and formats the void using the syntax of human logic. In doing so, he destroys the very randomness he sought to liberate.

Kantian Hangover

By relying completely on Cantorian set theory and negative formalisms, the speculative maneuver claims to neutralize the Principle of Sufficient Reason. It vaporizes the metaphysical guarantee that laws have to remain stable. Paradoxically, the same maneuver leaves the positive constraints required for a localized, phenomenological world unexplained.

Categorizing Hyper-Chaos as an inverted theology, a disguised supernaturalism, correctly identifies this structural void (Johnston 2013, 148–154; 168–169). A universe where fundamental laws can spontaneously mutate without material friction lacks a generative engine for historical persistence. The Principle of Sufficient Reason, which the maneuver set out to destroy, is inadvertently recapitulated.

Hyper-Chaos acts as a surrogate causal ground, a vulnerability exposed by Thomas Sutherland. By establishing it as a positive ontological entity that guarantees the absolute capacity for things to become other than they are, Meillassoux imbues the void with causal power (Sutherland 2014, 10–11). Hyper-Chaos serves as the reason why radical contingency can occur, thus failing to achieve true unreason. The failure generates a profound dualism of causality. Meillassoux essentially splits reality into two discrete causal domains. The first consists of standard physical laws dictating localized connections. The second is a primordial form of causation emerging from Hyper-Chaos whenever those laws are disrupted (Sutherland 2014, 11). Rather than eliminating causation in the absolute, he obfuscates it behind the label of contingency.

For hyper-chaos to guarantee the virtual potentiality of becoming-other, it logically necessitates a subsequent moment of time where this actualization can occur (Sutherland 2014, 3–4, 11–12). Therefore, hyper-chaos cannot be truly lawless. It imposes a "minimally structured ontology of time," rendering the absolute structurally reasonable and refuting its own premise. Meillassoux secured the ontological void, but he lost the physical apparatus of the world. He dismantled the correlationist prison, neutralizing the Kantian architecture, but forgot to engineer a stable floor for empirical reality.

The physical deficit exposes a structural identification gap. Meillassoux offers no operative means to justify identifying the total space of physical possibilities with the untotalizable Cantorian universe of sets (Livingston 2013, 105). This constitutes a structural impossibility rather than a simple empirical oversight. If the mathematical universe of sets is genuinely untotalizable, mapping the entire manifold of physical possibility onto it becomes an unassimilable operation. The isomorphism collapses.

As a result, his defeat of necessity is rendered theoretically hollow. It resembles a goetic binding that fails to capture the material substrate it attempts to summon. A mechanical bridge spanning global incompressibility and local stability is essential if this realist project is to survive. Standard philosophical prose is an obsolete instrument for mapping this terrain. We require an algorithmic model, one capable of calculating a universe that is globally chaotic but locally stabilized by the friction of its own operational limits. The current speculative settlement is bankrupt; the modal bridge must be built.

Cybernetic Epistemology

The cage has been shattered, exposing thought to the Great Outdoors. Translated into the algorithmic stance, this Absolute Contingency is synonymous with maximum computational complexity. A truly random world cannot be shortened into a predictive formula. Meillassoux supplies a purely negative architecture, one capable only of destroying necessity. This generates a severe operational problem. Surviving this radical openness necessitates a positive, generative engine for localized systems. Moving from abstract transfinite sets to applied algorithmic structures becomes essential. Cybernetic epistemology begins to address this algorithmic void by confronting formal logic with its own internal boundaries.

The conceptual vocabulary of Yuk Hui constructs this critical theoretical bridge. By fusing computer engineering with continental philosophy, Hui grounds purely mathematical absolute contingency in the thermodynamic reality of computation. His theoretical project rethinks system dynamics through the lens of indeterminacy. He maps how cybernetics, artificial intelligence, and digital automation interact with the absolute limits of formal logic. This approach reveals the organic, evolutionary behavior of algorithms operating beyond closed deterministic boundaries. Hui introduces the framework of recursivity, acting as a spiral looping mechanism. The process actively absorbs unexpected contingency, using it as raw fuel for its own structural evolution. Recursivity transforms contingency from a system-crashing error into a generative necessity for complex systems.

Crucially, the "over-chaos" at stake here transcends pure, formless chaos. It functions strictly as an affirmation of the absoluteness of contingency (Hui 2019, 37). Hui identifies absolute contingency as an "antisystemic concept." It acts as the ultimate limit to any system's capacity to reduce unexpected, contingent events into statistical probabilities (Hui 2019, 37–38). The positive use of this absolute contingency lies precisely in its violent enforcement of systemic fragmentation. It sets a strict, impassable boundary against the totalization of any single, all-encompassing system.

Hui utilizes Kurt Gödel's incompleteness theorems to show that contingency is an intrinsic feature of calculation (Hui 2019, 38). When Cantorian multiplicity shatters the container of the "Whole," this structural fragmentation yields a profound algorithmic consequence. It mandates the affirmation of a plurality of unassimilable systems. The mathematical void enables the existence of non-human, opaque computational structures. These "black box" algorithms remain permanently impenetrable to human cognitive power (Hui 2019, 38).

Despite this cybernetic bridge, the resulting environment remains an algorithmic void. We possess a robust mathematical architecture mapping the absolute necessity of contingency, but we have simultaneously lost the functional mechanics required to explain the localized world we actually inhabit. Meillassoux has engineered a "rationalist absolutism" that ultimately lacks an absolute (Johnston 2013, 172). Hyper-Chaos functions perfectly as a model to explain why the sun might inexplicably detonate tomorrow. It possesses zero structural capacity to explain why the sun has not exploded so far and why it remains suspended in autopoietic stability today. The transition from global incompressibility to local stability remains an unsolved structural problem. We are left staring into a blinding Absolute, paralyzed by a fundamental deficit of logic.

Part IV: The Modal Bridge

The Formal Apparatus

Analytic philosophy harbors a historical desire to map the structural coordinates of necessity and possibility with the absolute rigor of mathematics. The allure of a completely objective, quantifiable universe drives this investigation, compelling the integration of modern logic. David Lewis, the architect of the ultimate orthodox standard, took this classical analytic ambition to its logical extreme. A titan of late twentieth-century philosophy, Lewis approached sprawling metaphysical problems with the uncompromising precision of formal mathematics. His overarching intellectual project forced metaphysics to submit completely to these formal structures, applying the semantic tools developed for logic directly to the physical architecture of reality.

Possessing a radical commitment to theoretical utility, Lewis constructed a pristine, mathematically perfect cosmos entirely devoid of thermodynamic friction. He achieved this clean, quantifiable framework through a specific method of objective quantification. Lewis translates necessity and possibility into universal and existential quantification over possible worlds, treating these alternate worlds as a fully instantiated, objective domain of entities (Lewis 1986, 5–7). He was willing to accept staggering ontological conclusions to secure this perfect semantic clarity. Lewis deliberately absorbed massive ontological bloat, expressly justifying this vast realm of unactualized possibilia as a necessary "paradise for philosophers" (Lewis 1986, 4–5).

Pristine logical quantification establishes the foundation for modern modal systems, which act as a diagnostic hierarchy constructed upon the structural conditions of accessibility relations connecting distinct worlds (Lewis 1986, 17–20). System K functions as the minimal baseline, where necessity distributes across implication while guaranteeing no concrete truth. The introduction of a reflexive accessibility relation generates System T, securing the fundamental premise that necessity dictates truth.

System S4 intensifies the circuit through its defining Axiom 4, establishing that if a proposition is necessary, it is necessarily necessary ($\Box\text{p} \rightarrow \Box\Box\text{p}$) (Hughes and Cresswell 1996, 53). The paradigm shifts away from unconstrained possibility toward a rigidly structured iteration of modalities. Its semantic frame requires accessibility relations to be reflexive and transitive, without requiring symmetry (Hughes and Cresswell 1996, 56–57). Because S4 never guarantees accessibility in reverse, it can model movement between states as a directional, irreversible path. Inherent to this structure is the logical equivalent of computational cost, or algorithmic execution time.

The strongest architecture, System S5, is characterized by Axiom E. This axiom dictates that if a proposition is possible, it is necessarily possible ($\Diamond\text{p} \rightarrow \Box\Diamond\text{p}$) (Hughes and Cresswell 1996, 58). This creates a paradigm of pure mathematical possibility where everything potential is eternally anchored as a necessary potentiality. Semantically, S5 is validated by frames whose accessibility relation is an equivalence relation: reflexive, transitive, and symmetric. As a result, every world can see every other world in its equivalence class, generating an infinitely accessible, zero-friction network (Hughes and Cresswell 1996, 60–61).
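The correspondence between frame conditions and axioms can be checked by brute force on a toy model. The following is a minimal sketch, not a general theorem prover: the two-world frames and the names `K_FRAME`, `S4_FRAME`, `S5_FRAME`, and `valid` are illustrative assumptions, and validity is tested only for a single propositional letter across all of its valuations.

```python
from itertools import product

def box(worlds, R, truth):
    """Worlds where 'necessarily phi' holds, given the worlds where phi holds."""
    return {w for w in worlds if all(v in truth for v in worlds if (w, v) in R)}

def diamond(worlds, R, truth):
    """Worlds where 'possibly phi' holds, given the worlds where phi holds."""
    return {w for w in worlds if any(v in truth for v in worlds if (w, v) in R)}

def axiom_T(W, R, p, w):
    # box p -> p
    return w not in box(W, R, p) or w in p

def axiom_4(W, R, p, w):
    # box p -> box box p
    bp = box(W, R, p)
    return w not in bp or w in box(W, R, bp)

def axiom_5(W, R, p, w):
    # diamond p -> box diamond p (the axiom the text calls E)
    dp = diamond(W, R, p)
    return w not in dp or w in box(W, R, dp)

def valid(worlds, R, axiom):
    """True if the axiom holds at every world under every valuation of p."""
    ordered = sorted(worlds)
    for bits in product([False, True], repeat=len(ordered)):
        p = {w for w, b in zip(ordered, bits) if b}
        if not all(axiom(worlds, R, p, w) for w in ordered):
            return False
    return True

# Hypothetical two-world frames chosen for illustration.
W = {0, 1}
K_FRAME = {(0, 1)}                          # no reflexivity
S4_FRAME = {(0, 0), (1, 1), (0, 1)}         # reflexive, transitive, one-way
S5_FRAME = {(a, b) for a in W for b in W}   # equivalence relation

for name, R in [("K", K_FRAME), ("S4", S4_FRAME), ("S5", S5_FRAME)]:
    report = {ax.__name__: valid(W, R, ax) for ax in (axiom_T, axiom_4, axiom_5)}
    print(name, report)
```

On the one-way frame, world 0 can reach world 1 but not the reverse, and this missing return path is what makes the S5 axiom fail while Axiom 4 survives.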

Necessitism and the Container Fallacy

Analytic metaphysics frequently defaults to System S5 as the definitive formal expression of modal logic. The paradigm establishes a pure, unconstrained equivalence relation of accessibility. Within orthodox modal realism, Lewis secures this infinitely accessible structure by setting a strict theoretical trap. His classical formulation relies entirely on the premise that these possible worlds function exclusively as causally isolated, non-interacting concrete entities, remaining devoid of any trans-world causal or spatiotemporal relations (Lewis 1986, 69–80). His system remains internally consistent precisely because it forbids physical traversal between its domains. Isolating logic from physical reality constructs the ultimate metaphysical container, one waiting to be shattered by the thermodynamic reality of computation.

A fundamental error emerges when contemporary materialist ontologies appropriate this S5 paradigm as a universal physical substrate. Transporting frictionless modal accessibility into a computational model ignores the thermodynamic realities of calculation. The mathematical structure of S5 maps onto a fantasy of zero-cost computation, assuming a completed totality of abstract states can be modeled or accessed without physical friction. Transfinite mathematics and algorithmic information theory expose this completed totality as a foundational impossibility. The universal accessibility demanded by a materialist deployment of S5 is computationally insolvent.

Deploying S5 as a universal substrate generates an ideological distortion. The system hard-codes contingent empirical discoveries into eternal metaphysical laws. In doing so, it freezes temporary historical configurations and elevates them into unbreakable absolutes. Asserting that statements like "Water is H2O" constitute necessary truths across all possible worlds attempts to bind the sheer openness of Hyper-Chaos. It traps that openness inside a static ledger of fixed, proprietary identities. Modal realism champions this "Container Fallacy." It treats possibility as a concrete location—a literal container of "possibilia"—rather than treating it as a dynamic property grounded in the actual capacities of physical systems.

Computational Friction

Breaking the container fallacy reveals that the digital realm has abandoned the clean, deductive logic of Kantian grids, favoring an architecture infected by alien rules and computational entropy. Luciana Parisi's critical intervention investigates what happens to philosophy when it is forced to confront this automated reasoning. Her work acts as the pivot point where abstract modal containers are replaced by active, data-driven contagion, effectively mobilizing the very randomness Meillassoux leaves hanging in the void.

Algorithmic architecture offers a countermeasure against the container model. In Contagious Architecture, Parisi rejects the reductionist view of algorithms as Boolean executors operating in a vacuum. Instead, she argues that spatial structures are actively constructed by code (Parisi 2013, 3–6). When algorithms process vast amounts of data, they introduce uncomputable, entropic randomness into the system, which explains how algorithms become actual data objects possessing volume, depth, and density. They are thickened by the indeterminacy they attempt to process. Rather than occupying a pre-existing metaphysical vacuum, they generate spatial structures laden with computational friction.

Traditional metaphysics treats possibility as a frictionless domain. A complexity-theoretic lens forces philosophy to confront the material constraints and thermodynamic expenditures inherent in calculation, and in doing so strikes directly at Lewis's modal realism. The theoretical computer scientist Scott Aaronson, a prominent translator between computational mathematics and analytic philosophy, argues that philosophers have remained historically fixated on early twentieth-century computability theory, specifically the era of Turing and Gödel. Aaronson pushes the discourse toward modern computational complexity. He emphasizes that complexity theory differs fundamentally from basic computability by dealing directly with the constraints of the physical sciences and the actual resources required to solve problems.

Aaronson elevates the polynomial versus exponential divide from an engineering concern into a structural ontological limit. He demonstrates that the quantitative difference between polynomial time—representing efficient feasibility—and exponential time—representing unfeasible exhaustion—is astronomically vast, constituting a hard, qualitative philosophical boundary (Aaronson 2011, 5–6). To illustrate this computational friction vividly, Aaronson offers a severe cosmological constraint: a computer could theoretically check all $2^{1000}$ possible proofs to solve a problem, but the universe would degenerate into black holes and radiation long before the process finished. Modal accessibility is never a frictionless line drawn between abstract domains. It is violently bound by this polynomial and exponential divide of raw computational limits.
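The scale of this divide is easy to underestimate, so a short piece of arithmetic helps. The sketch below compares the $2^{1000}$ candidates against a deliberately generous search budget. The figures `OPS_PER_SECOND` and `SECONDS_AVAILABLE` are hypothetical assumptions introduced only for illustration and do not come from Aaronson.

```python
# Number of candidate proofs in the illustration.
PROOFS = 2 ** 1000

# Hypothetical, deliberately generous assumptions (not taken from the source):
OPS_PER_SECOND = 10 ** 30      # far beyond any existing machine
SECONDS_AVAILABLE = 10 ** 20   # longer than the current age of the universe

checked = OPS_PER_SECOND * SECONDS_AVAILABLE

# How many decimal digits the search space has, and how many such budgets
# would be needed to exhaust it.
digits_in_search_space = len(str(PROOFS))
budgets_needed = PROOFS // checked
digits_in_budgets_needed = len(str(budgets_needed))

def steps_polynomial(n, k=3):
    """Cost of a hypothetical cubic-time procedure on input size n."""
    return n ** k

def steps_exponential(n):
    """Cost of exhaustive search over all bit strings of length n."""
    return 2 ** n

print("digits in 2**1000:", digits_in_search_space)
print("digits in number of budgets needed:", digits_in_budgets_needed)
print("cubic cost at n=1000:", steps_polynomial(1000))
print("budget covers exhaustive search:", checked >= steps_exponential(1000))
```

Even under these assumptions the cubic procedure finishes in a billion steps, while the exhaustive search remains out of reach by hundreds of orders of magnitude.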

Deploying the defense of actualism is critical to dismantling this container model. Kit Fine systematically challenges the Lewisian framework, arguing that modal truths must be grounded exclusively in the actual world and the properties of existing objects, rather than outsourced to concrete, causally isolated alternate universes (Fine 2005, 11–15). This establishes a robust analytic precedent for rejecting Lewis's spatialized modal realism.

The Problem of Pre-Computation

Contrasting Meillassoux’s continental use of mathematics with a parallel development in the analytic tradition reveals another rigorous method for shattering the correlationist enclosure. Timothy Williamson, a leading analytic philosopher and logician, champions a strict methodological realism that treats philosophical logic directly as an investigation into the architecture of reality. While traditional views frequently relegate logic to the study of language or human concepts, Williamson moves past this limitation. He uses absolute formalization to access the external universe independently of the human mind. Expressly denying the conception of logic as a neutral referee of metaphysical disputes, he insists that logic exists to supply a central structural core to scientific theories (Williamson 2013, xi). This anchors the overarching theme of replacing epistemological precariousness with hard mathematical and logical boundaries.

Williamson's concept of "necessitism" critiques Kantian and Humean limits. Necessitism establishes a permanent, unalterable structure of objects by asserting the doctrine that ontology itself is unconditionally necessary (Williamson 2013, 2). The architecture secures a realm of entities immune to contingent empirical observation or subjective cognitive synthesis. His formalized logic bypasses subjective human experience, functioning similarly to Negarestani’s use of algorithmic limits. By locking reality into a rigid structure outside the manipulation of the correlationist subject, Williamson supplies a powerful analytic mechanism to secure the Great Outdoors.

The critique of actualism must evolve to confront its most sophisticated mutation. Williamson constructs a far more resilient actualist defense of System S5 by abandoning the Lewisian model of causally isolated, concrete alternate universes. His doctrine of necessitism asserts that everything is necessarily something, formalized by the modal theorem $\Box\forall\text{x}\Box\exists\text{y}(\text{x}=\text{y})$ (Williamson 2013, 2). Williamson insists that possible objects, such as a potential physical child, possess actual logical being in our current world and exist as purely abstract logical subjects (Williamson 2013, 11–14). Kit Fine’s actualism fails to defeat Williamson because Williamson already functions in an actualist paradigm.

To secure this architecture, Williamson deploys the Barcan Formula, formally expressed as $\Diamond\exists\text{xFx} \rightarrow \exists\text{x}\Diamond\text{Fx}$. Together with its converse, the logical axiom mandates a fixed, constant domain of quantification across all accessible worlds (Williamson 2013, 31–33). It strictly dictates that if something possibly exists, there has to already be an entity in the current domain possessing the property of possible existence. Such a constant domain violates the radical openness of Meillassoux's absolute contingency, demanding an eternally pre-calculated ledger of all potential mathematical coordinates.
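The link between the Barcan Formula and the domain of quantification can be made concrete with a two-world toy model. The sketch below is an illustration under stated assumptions: the worlds `w0` and `w1`, the individuals `a` and `b`, and the dictionary layout are all hypothetical. It checks the formula once in a model whose domain grows and once in a model whose domain is constant.

```python
def possibly_exists_F(model, w):
    """Diamond Exists x F(x): some accessible world has an F in its own domain."""
    return any(
        any(x in model["F"][v] for x in model["domain"][v])
        for v in model["access"][w]
    )

def exists_possibly_F(model, w):
    """Exists x Diamond F(x): some x in w's own domain is F at an accessible world."""
    return any(
        any(x in model["F"][v] for v in model["access"][w])
        for x in model["domain"][w]
    )

def barcan_holds(model):
    """Check Diamond Exists x F(x) -> Exists x Diamond F(x) at every world."""
    return all(
        (not possibly_exists_F(model, w)) or exists_possibly_F(model, w)
        for w in model["domain"]
    )

# Every world sees every world (an S5-style accessibility relation).
ACCESS = {"w0": {"w0", "w1"}, "w1": {"w0", "w1"}}

# Hypothetical model 1: individual b exists only at w1, where it is F.
growing_domain = {
    "domain": {"w0": {"a"}, "w1": {"a", "b"}},
    "access": ACCESS,
    "F": {"w0": set(), "w1": {"b"}},
}

# Hypothetical model 2: the same facts, but b already belongs to every domain.
constant_domain = {
    "domain": {"w0": {"a", "b"}, "w1": {"a", "b"}},
    "access": ACCESS,
    "F": {"w0": set(), "w1": {"b"}},
}

print("growing domain:", barcan_holds(growing_domain))
print("constant domain:", barcan_holds(constant_domain))
```

In the growing model, world `w0` can see a world containing an F, yet nothing in its own domain is possibly F, so the formula fails there. Placing `b` in every domain in advance restores it, which is the pre-calculated ledger the paragraph above describes.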

Dismantling this frictionless accessibility requires a concrete mechanism showing that these possible worlds cannot be totalized. The critique of Hilbert’s Formal Axiomatic Systems (FAS) provides the algorithmic weapon. Gregory Chaitin demonstrates that a FAS can never determine more bits of $\Omega$ than its own program-size complexity (Chaitin 2005, 65–66). The axioms themselves have to be as complicated as the theorems they attempt to generate, obliterating the utility of traditional mathematical proof for compressing irreducible facts (Chaitin 2005, 69–70). A brutal algorithmic constraint establishes that the "God's eye view" demanded by System S5 is computationally bankrupt. The system cannot pre-calculate the domain without possessing an infinite, incompressible axiomatic base.

The incomputable core of formal logic amplifies this constraint. Drawing on algorithmic information theory, Parisi demonstrates that the limits of computation, including the halting problem and internal algorithmic indeterminacies, transcend simple system failures. Instead, she leverages these limits as proof that "incomputable algorithms" and "computational entropy" are generative ontological features (Parisi 2013, 17–18). Randomness and incompressible data are intrinsically embedded in the very architecture of formal logic. Internal friction physically prevents the universe from operating like a frictionless, pre-calculated metaphysical ledger, permanently preventing the reduction of physical complexity to any closed, finite axiomatic system (Parisi 2013, 42). The illusion of computational exhaustibility is structurally shattered.

This expanded ontology is defended through abductive reasoning regarding theoretical elegance. Williamson argues that unrestricted classical quantification and the S5 modal logic provide a vastly simpler, mathematically smoother model compared to the highly restricted logic required by contingentism (Williamson 2013, 148–149). This methodological justification successfully isolates the logical domain from physical friction, establishing that possible objects exist as non-concrete logical subjects requiring zero thermodynamic energy or spatial dimensions (Williamson 2013, 11–14).

However, the defense exposes a severe category error within the strictures of algorithmic realism. Theoretical elegance remains a distinct property from algorithmic coherence. While non-concrete entities bypass the thermodynamic limits of physical data storage, they run aground on the constraints of computational generation. Positing a constant, infinite domain of unactualized logical subjects calls for a completed, uncomputable infinity of mathematical coordinates. Because algorithmic information theory prohibits the existence of an infinite axiomatic base without a corresponding algorithmic generator, the infinite logical domain cannot be pre-calculated or mathematically compressed. The architecture collapses under the weight of an impossible, frictionless mathematical pre-computation, finalizing the structural pivot to Algorithmic Realism.

Identity and Forcing

The fantasy of frictionless accessibility generates a severe temporal paradox in Hyper-Chaos itself. At this juncture, Ardoline springs the theoretical trap he identified earlier concerning the "two worlds theory." If physical laws are independently real and subject to contingent, unreasoned change, the metaphysical status of the past has to be confronted. What happens to history when the laws governing its objects spontaneously mutate?

Ardoline details the mechanics of this threat. A hyper-chaotic shift in laws forces a choice separating two catastrophic outcomes. It could trigger an "epistemological occlusion," creating a problem of the static past where scientific descriptions become permanently hidden from observation and inaccurate to both datasets. Alternatively, it forces an "ontological retro-causation," where the previous past is retroactively altered and replaced to remain consistent with the newly instantiated laws (Ardoline 2018, 240–242).

Under Meillassoux's implicit S5 paradigm, scientific statements concerning the previous past would be scoured from history or rendered inaccessible to literal interpretation. Ardoline drives home the ultimate diagnostic conclusion: the retro-causation paradox threatens to destroy the very method Meillassoux used to break correlationism in the first place. The ability of science to make literal, ancestral statements about the Arche-Fossil is annihilated if the past can be retroactively overwritten by a hyper-chaotic event. The fatal trap justifies the architectural shift to System S4.

To dissolve this binding, the essentialist system of modal logic requires immediate isolation and reverse-engineering. Fine’s central project reverses the standard modal paradigm, arguing that necessity is grounded in the essence, or "nature," of things, contrasting with the orthodox view where essence is defined by necessity across possible worlds (Fine 2005, 134–135). However, the concept of a metaphysical "nature" acts merely as an obsolete, static placeholder. Dismantling it entails a deliberate displacement of Saul Kripke's analytic machinery.

Kripke's foundational authority can be repurposed by contrasting his original essentialist goals with algorithmic materialism. Kripke originally engineered the rigid designator to anchor semantic reference across possible worlds using deeply traditional metaphysical essences, relying heavily on concepts like biological origin and atomic constitution (Kripke 1980, 48–49). The current theoretical architecture strips the mechanism of its orthodox origins. We systematically excise the metaphysical "essence" relied upon by Kripke and Fine, replacing it with the mechanics of computational identity. The rigid designator is repurposed to act exclusively as a pure algorithmic run-time, permanently severing the operation from abstract metaphysical properties.

Metaphysical "essences" routinely suffer from a severe error, as theorists repeatedly conflate linguistic ambiguity with genuine physical indeterminacy. Any perceived de re metaphysical contradiction is almost universally a de dicto discrepancy in how human syntax tracks a process (Varzi 2014, 69). True physical identity necessitates absolute algorithmic equivalence, tracking down to the sequence of localized logical operations. The universe operates as an algorithmically random, incompressible manifold where stable matter exists only as a temporary statistical pattern.

The defense of exemplification secures this physical identity. Accepting true contradictions destroys our pretheoretic understanding of what it actually means to instantiate a property. The very nature of exemplification fundamentally excludes something concurrently exemplifying and failing to exemplify a property (Zalta 2004, 19). Physical identity dictates strict algorithmic equivalence. If the localized world permitted true contradictions, the thermodynamic cost of calculation would become infinitely recursive, instantly collapsing any stable system.

To exist identically across counterfactuals, an entity has to execute the same algorithmic run-time. Any deviation or abbreviated structural shortcut generates a divergent mathematical architecture, permanently severing the thread of identity. The operant protocol allowing formal logic to maintain this identity across the hyper-chaotic void is what Meillassoux terms the "kenotype"—the type of the empty, meaningless sign (Meillassoux 2012, 25–26). We formalize this semiotic anchor through the ontological type-signature $\text{Kenotype} :: \text{Immutable} \rightarrow \text{Immutable}$.

Meillassoux separates standard empirical "repetition," which inherently suffers from spatial and temporal monotony and friction, from pure "iteration" (the unlimited, non-differential reproduction of an empty sign) and "reiteration" (the basis of the potential infinite and arithmetic) (Meillassoux 2012, 30–34). The kenotype acts as the semiotic equivalent to algorithmic run-times. The proposition that "Water is H2O" endures as the material result of specific, irreducible calculations grounded in this syntax. Structural friction remains the sole operative anchor holding the local system intact.

Redefining the Modalities

Rather than accepting System S5 as an exhaustive ontological map, we redefine it as an operative mode of the Absolute. We reconstruct the formal architecture by stratifying the modalities, cleanly distinguishing the global void from local appearance. System S5 governs the foundational substrate of Hyper-Chaos to formalize the radical necessity of contingency. We encode the Principle of Factiality through the axiom $\Box\Diamond\text{P}$. This axiom remains eternally tethered to the inviolable hardware of the Law of Non-Contradiction, $\Box\neg(\text{P} \wedge \neg\text{P})$, ensuring the void itself maintains a rigid, diabolical logical structure.

The ontological distinction between an object exemplifying a property and an abstract object encoding a property becomes the master key for the modal bridge. While an informal existence claim might describe a contradictory object, Zalta resolves the contradiction by stating the object is an abstract entity. It encodes these conflicting properties without actually exemplifying them in reality (Zalta 2004, 13–16). Mapping this directly onto the modal architecture clarifies the process. The global void of Hyper-Chaos acts mathematically as a sprawling database of abstract objects ($\text{HyperChaos} :: \{\text{AbstractObject}\}$) that safely encode all possible properties, including incomputable and paradoxical ones.

Conversely, the localized physical world, System S4, is the strict domain of exemplification. Because exemplification requires actual algorithmic execution and computational friction, it has to rigidly obey the Law of Non-Contradiction. An object cannot physically exemplify $\text{P}$ and $\neg\text{P}$ concurrently because the hardware run-time would fail.
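The contrast between encoding and exemplifying can be sketched as two data-handling regimes. This is a loose programming analogy and not Zalta's formal object theory: the names `Prop`, `AbstractObject`, `exemplify`, and `ContradictionError` are hypothetical. An abstract object may store any set of properties, while the function standing in for local exemplification refuses a property together with its negation.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Prop:
    """A property, optionally negated."""
    name: str
    negated: bool = False

    def negation(self):
        return Prop(self.name, not self.negated)

@dataclass(frozen=True)
class AbstractObject:
    """Encodes an arbitrary set of properties; no consistency check is made."""
    encodes: frozenset

class ContradictionError(Exception):
    """Raised when exemplification would violate non-contradiction."""

def exemplify(props):
    """Instantiate properties in the local domain, rejecting P alongside not-P."""
    props = frozenset(props)
    for p in props:
        if p.negation() in props:
            raise ContradictionError(f"cannot exemplify both {p.name} and its negation")
    return props

ROUND = Prop("round")

# Encoding a contradictory pair is unproblematic.
round_square_like = AbstractObject(frozenset({ROUND, ROUND.negation()}))

# Exemplifying the same pair fails; exemplifying a consistent set succeeds.
try:
    exemplify(round_square_like.encodes)
    contradiction_rejected = False
except ContradictionError:
    contradiction_rejected = True

consistent = exemplify({ROUND})
print(contradiction_rejected, consistent)
```

The check sits in `exemplify` and not in the `AbstractObject` constructor, mirroring the claim that non-contradiction constrains execution in the local world and leaves the global database unconstrained.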

To diagram the phenomenological cohesion of our immediate universe, System S4 acts as the superstructure. S4 models the local stability of a specific world, mapping the historical sedimentation of its operational laws. In this bounded domain, accessibility is strictly transitive. Time cements stability and accumulates structural mass. The intentional lack of symmetrical relations guarantees that historical processes remain irreversible, locking the system into its forward trajectory.

The transition from a global, hyper-chaotic S5 void into a localized, stable S4 world necessitates an operative protocol. The mathematical method of "forcing" gives the optimal solution. Developed by Paul Cohen, forcing is the exact procedure used to add a "generic extension" to a foundational model of set theory. It proves the independence, and thereby the absolute contingency, of the continuum hypothesis. The technique allows a local system to construct a consistent new reality out of an indiscernible, un-predicated generic multiplicity (Baki 2014, 150–155). Forcing acts as the algorithmic model for "Inhuman Reason." It supplies the operative protocol explaining how a structurally blind, contingent mathematical void physically constructs a localized, stable S4 environment devoid of a pre-existing metaphysical container: $(\text{Forcing} (\text{HyperChaos}) \rightarrow \text{S4\_Stability})$.

Crucially, the deliberate absence of a symmetric relation in this S4 environment calls for an algorithmic justification. The fundamental computational asymmetry of space and time provides this grounding. Because the mathematical extension of forcing requires sequential procedural steps, it inherently binds itself to thermodynamic time. In complexity theory, space (PSPACE) and time (P, NP, EXP) are treated as distinct, non-interchangeable resources. Memory cells can be overwritten and reused, whereas moments of time are consumed (Aaronson 2011, 41–42). Time could be reused only by introducing closed timelike curves, which would collapse it into a reusable spatial resource and grant a computer the otherwise unavailable power of PSPACE (Aaronson 2011, 44–45). This fundamental thermodynamic asymmetry locks our local universe into an irreversible historical sedimentation. Physical laws hold firm in our localized circuit to generate logical stability, successfully foreclosing the collapse into absolute metaphysical necessity.
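The asymmetry between reusable space and consumed time shows up in even the simplest exhaustive search. The sketch below uses a hypothetical function, `enumerate_assignments`, to walk through all $2^n$ bit strings of length $n$ with a single register that is overwritten in place. Memory stays at $n$ cells while the step count grows exponentially.

```python
def enumerate_assignments(n):
    """Visit all 2**n bit strings of length n using one reusable n-cell register.

    Returns (steps_consumed, cells_used).
    """
    register = [0] * n   # the only working memory, overwritten on every step
    steps = 0
    while True:
        steps += 1       # visiting the current bit string consumes one step
        # Binary increment in place: flip trailing 1s to 0, then set the next 0.
        i = 0
        while i < n and register[i] == 1:
            register[i] = 0
            i += 1
        if i == n:
            break        # the counter wrapped around: every string was visited
        register[i] = 1
    return steps, len(register)

for n in (4, 8, 12):
    steps, cells = enumerate_assignments(n)
    print(f"n={n}: cells={cells}, steps={steps}")
```

The register ends in the same state it started in, so its cells are fully recovered, but no operation recovers the steps already taken.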

Part V: The Descent into Matter

Thermodynamic Modality

Speculative realism hits a hard limit when it attempts to ground the physical universe exclusively in pure mathematical ontology. To establish the Absolute as a space of pure contingency, Meillassoux dismantled the correlationist paradigm by using transfinite mathematics to neutralize probability (Meillassoux 2008a, 62–65). While this maneuver prevents the logical collapse of the void, it completely fails to account for the thermodynamic stability of our localized universe. A decisive philosophical break from pure ontology is necessary. To complete the architecture, the system demands a physical and computational substrate. The descent into Algorithmic Realism begins here.

Orthodox analytic metaphysics treats modal accessibility as a purely abstract, non-physical relation. David Lewis insulates his architecture by stipulating causal isolation, ensuring that possible worlds remain fundamentally disjoint and prohibiting any physical traversal between them (Lewis 1986, 69–80). While logically coherent as a mathematical abstraction, this model fails when deployed as a foundation for physical reality. Algorithmic Realism rejects the premise of disconnected metaphysical islands. Asserting that a possible world is accessible from a current state requires positing a demonstrable physical operation—one that necessitates actual data transfer and processing power. In a material universe, accessibility relations must function as physical events, tightly bound by the rigid laws of algorithmic execution.

Moving beyond purely abstract metaphysics relies on a theoretical paradigm where algorithms possess their own material weight. Computational abstraction has to be treated as a concrete ontological reality, surpassing its role as a mere epistemological tool. M. Beatrice Fazi's perspective supplies this conceptual bridge. Working across philosophy, technology, and digital aesthetics, Fazi explicitly challenges the traditional humanities consensus regarding computation. Rejecting the assumption that digital systems are purely representational or strictly deterministic, she utilizes the mathematical limits discovered by Alan Turing and Gödel to argue for a dynamic, generative understanding of algorithmic processes. The approach connects Meillassoux's absolute contingency directly with the mechanics of computer science.

Fazi establishes a rigorous position on the reality of abstraction. She argues that the act of computing constitutes a lived, material event in the world. According to her thesis, computational abstraction acts as a real, physical occurrence possessing tangible consequences (Fazi 2018, 103–109). When a system computes a transition between states, the formal abstraction is instantiated as an actual operation in the material universe. System S4 represents the true metaphysical reality precisely because its accessibility relation, which is reflexive and transitive but not necessarily symmetric, formalizes this irreversible computational work. A possible world is only accessible if a demonstrable, computable pathway exists to reach it. The gap separating a purely mathematical possibility from a realized physical state is simply the expenditure of an irreducible computational cost.

Hyper-Chaos as Incompressibility

Metabolizing the void into a functional architecture is essential. Under this lens, hyper-chaos ceases to be a mystical absence of reason and becomes a precisely defined state of maximum algorithmic complexity. In the framework of Algorithmic Information Theory, absolute contingency aligns with complete data incompressibility. A truly random sequence simply lacks any unifying heuristic or abbreviated formula.

The algorithmic diagnosis of hyper-chaos must be historically grounded in the Leibnizian precedent. Gregory Chaitin resurrected a 17th-century philosopher to solve a 20th-century computational problem, recognizing that Gottfried Wilhelm Leibniz, in his Discourse on Metaphysics (1686), had already established the metric for distinguishing a universe governed by scientific law from a totally lawless void (Chaitin 2005, 33). Chaitin clarifies this compression mechanic by defining a scientific theory strictly as a binary computer program expressly designed to compress experimental data (Chaitin 2005, 34). We can formalize this relationship using Kolmogorov complexity $K(x)$, where $K(x)$ is the length of the shortest program that outputs data $x$. A scientific law exists only when $K(x) \ll |x|$ (the program is significantly smaller than the data it describes).

The definition locks the two theorists together, allowing Meillassoux’s Hyper-Chaos to be equated with Chaitin's algorithmic incompressibility. When the smallest program required to calculate a dataset is approximately the same size as the data itself ($K(x) \approx |x|$), the system exhibits absolute contingency. The data cannot be abbreviated. It is patternless, irreducible, and mathematically random, providing the exact mathematical mechanism for the "Great Outdoors": a domain of irreducible mathematical facts operating without structural justification.
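The contrast between $K(x) \ll |x|$ and $K(x) \approx |x|$ can be illustrated, very roughly, with an ordinary compressor. Kolmogorov complexity is uncomputable, so the sketch below uses Python's zlib only as an upper-bound proxy; the function name `compression_ratio` and both sample datasets are illustrative assumptions, not part of Chaitin's apparatus.

```python
import random
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size: a crude proxy for K(x)/|x|."""
    return len(zlib.compress(data, 9)) / len(data)


# Lawful data: a short rule ("repeat 01") generates the whole sequence.
lawful = b"01" * 5000

# Pseudo-random data, seeded so the run is repeatable. This is only a stand-in
# for true randomness: a seeded generator is itself a short program, so the
# real K(x) of this sequence is small even though zlib cannot find the pattern.
rng = random.Random(0)
noisy = bytes(rng.getrandbits(8) for _ in range(10_000))

lawful_ratio = compression_ratio(lawful)
noisy_ratio = compression_ratio(noisy)
print(f"lawful: {lawful_ratio:.3f}  noisy: {noisy_ratio:.3f}")
```

The lawful sequence shrinks to a small fraction of its length, while the noisy one does not shrink at all. The comment on the seeded generator marks the limit of the illustration: a practical compressor can fail to find a pattern that a shorter program would exploit.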

This environment of irreducible mathematical facts finds its clearest instantiation in the Halting Probability, mathematically designated as $\Omega$. The concept has to be expanded from a passing analogy into a structural pillar of the absolute. Chaitin defines the individual bits of $\Omega$ as irreducible mathematical facts, simulating the physical randomness of independent coin tosses entirely in the domain of pure mathematics (Chaitin 2005, 61–63). These bits stand as logically irreducible truths, incapable of derivation from any axiomatic principles simpler than themselves. The constraint shows that the absolute contingency Meillassoux locates in the physical universe operates just as forcefully in the bedrock of mathematical logic. It effectively dissolves the rationalist worldview that demands a sufficient reason for all truths (Chaitin 2005, 67–69).
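For reference, the standard definition of the halting probability can be stated compactly. It is given here as background rather than quoted from the pages cited above, and the symbols $U$ (a fixed prefix-free universal machine), $p$ (a program), and $|p|$ (the length of $p$ in bits) are notation introduced for this sketch:

$$ \Omega_U = \sum_{p \,:\, U(p)\ \text{halts}} 2^{-|p|}. $$

Because the halting programs of a prefix-free machine form a prefix-free set, the Kraft inequality keeps the sum between 0 and 1. The value depends on the choice of $U$, which is why $\Omega$ is better read as a family of numbers sharing the same irreducibility than as a single constant.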

The diagnosis of incomputability critically fortifies this formalization. Fazi argues that formal computational systems inherently generate their own positive ontological contingency (Fazi 2018, 5–10, 64–67). By redefining the boundaries of automated reasoning, she contends that the limits of calculation, such as the halting problem, act as generative features of the world. They introduce a profound indeterminacy into the heart of computational logic. These algorithmic constraints constitute positive structural realities, far surpassing simple epistemological deficits or mechanical system failures. The context firmly anchors the central claim: the very fabric of radical contingency is produced directly by the internal, insurmountable limits of the physical computational substrate.

Computational Irreducibility

The observable stability of our local world is a temporary, localized compression of data drawn from this underlying incompressibility. Reza Negarestani’s analysis of Ray Solomonoff’s theory of inductive inference formalizes the operation. Negarestani identifies an absolute duality between regularity and compression. Anything capable of compressing data constitutes a type of physical regularity; conversely, any structural regularity can serve to compress data (Negarestani 2018, 533–534). The phenomenal stability of the physical world acts strictly as an algorithmic compression of incompressible, hyper-chaotic data.

The physical constants of the universe persist reliably over time, yet this structuration does not rely on a hidden metaphysical necessity. Classical scientific epistemology presents the universe as a frictionless container governed by smooth, continuous equations. Framing the universe instead as a discrete, generative engine introduces algorithmic reality. Stephen Wolfram's intervention maps this new computational territory. A prodigious talent in high-energy physics, Wolfram eventually realized that traditional mathematical models fail profoundly to capture natural complexity. This deep dissatisfaction drove a radical transition into software development, culminating in the creation of Mathematica. The biographical trajectory mirrors the philosophical pivot away from classical paradigms toward a rigorous computational ontology.

Wolfram's study of elementary cellular automata provides the essential narrative bridge. Specifically, the chaotic output of Rule 30 illustrates the precise moment a simple deterministic system breaks human predictive intuition (Wolfram 2002, 27). Wolfram introduces computational irreducibility, which serves here as the direct mathematical analog to absolute contingency. Traditional science assumes that discovering the right formula allows us to predict a physical system's future state faster than the system can evolve.

Wolfram argues that for many complex systems governed by simple rules, no such mathematical shortcut exists. The system's evolution has to be simulated step by step to know its outcome (Wolfram 2002, 737). This intrinsic unpredictability marks a formal limit on human reason, binding his computer science to the Principle of Factiality. The universe maintains local stability precisely because it is forced to execute its own run-time step by step. Hyper-chaos cannot instantaneously dissolve the local universe. Jumping to a wildly divergent, randomized physical state would require bypassing intermediate computational steps, an act which computational irreducibility strictly prohibits (Wolfram 2002, 738–740).
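The step-by-step character of Rule 30 can be made concrete with a minimal simulation. The update rule (new cell = left XOR (center OR right)) is the standard definition of Rule 30; the function names, the zero-padded boundary, and the choice of six steps are assumptions made for this sketch.

```python
def rule30_step(cells):
    """Apply one Rule 30 update: new = left XOR (center OR right)."""
    padded = [0] + cells + [0]  # treat everything beyond the edges as 0
    return [
        padded[i - 1] ^ (padded[i] | padded[i + 1])
        for i in range(1, len(cells) + 1)
    ]


def rule30_rows(steps):
    """Evolve a single live cell for `steps` updates and return every row."""
    width = 2 * steps + 1  # wide enough that the pattern never hits the edge
    cells = [0] * width
    cells[steps] = 1
    rows = [cells]
    for _ in range(steps):
        cells = rule30_step(cells)
        rows.append(cells)
    return rows


rows = rule30_rows(6)
center_column = [row[6] for row in rows]

for row in rows:
    print("".join("#" if cell else "." for cell in row))
```

The sketch obtains row n only by computing rows 0 through n − 1 first. It illustrates what step-by-step simulation looks like; it does not itself establish that no shortcut exists.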

The barrier preventing hyper-chaos from instantaneously dissolving our immediate reality demands formalization, and Scott Aaronson’s "NP Hardness Assumption" supplies it. Aaronson asserts that determining the true limits of efficient computation requires leaving the philosophical armchair to incorporate actual facts concerning physical reality and quantum mechanics.

Grounded in physicalism, his assumption postulates that no physical means exist to solve NP-complete problems in polynomial time. The limit encompasses probabilistic computing, quantum mechanics, and any other computational model compatible with known physical laws (Aaronson 2011, 44). To secure this restriction, Aaronson invokes David Deutsch’s "Evolutionary Principle" (EP). The EP dictates that knowledge requires a sequential causal process to bring it into existence, precluding the spontaneous generation of complex information devoid of requisite computational labor (Aaronson 2011, 44).

In its original parameters, Aaronson deploys the assumption primarily to map the epistemic and thermodynamic constraints governing localized systems. Crossing the gap between Meillassoux's absolute contingency and empirical reality requires ontologizing Aaronson’s computational boundary through deliberate speculative extrapolation.

Elevating the NP Hardness Assumption to a universal metaphysical law appears to commit the hasty ontologization Johnston previously warned against. However, conflating a human epistemic limit with the bedrock of reality is avoided because computational complexity is fundamentally physical. It is inextricably tied to thermodynamic and spatial limitations via principles like Landauer's limit and the Bekenstein bound.
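To give a sense of the physical scale involved, Landauer's limit puts the minimum heat dissipated by erasing one bit at $k_B T \ln 2$. The sketch below evaluates that bound; the temperature of 300 K and the function name are assumptions chosen purely for illustration.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # exact SI value of the Boltzmann constant


def landauer_limit_joules(temperature_kelvin: float) -> float:
    """Minimum energy in joules needed to erase one bit at this temperature."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


room_temperature_cost = landauer_limit_joules(300.0)
print(f"{room_temperature_cost:.3e} J per erased bit at 300 K")
```

The figure is tiny per bit, but it is strictly positive, which is all the argument requires: every irreversible logical operation carries a nonzero thermodynamic price.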

As a result, Aaronson's assumption serves as the primary barrier preventing Meillassoux's hyper-chaos from instantly dissolving local reality. The physical universe cannot spontaneously compute its own hyper-chaotic alternative states because doing so necessitates exponential temporal resources. Insurmountable computational friction locks the universe firmly into stable polynomial time.

The restriction to polynomial-time calculation is the exact protocol preventing reality from collapsing into an S5 global omniscience. If the universe operated without the NP Hardness boundary, it could instantly evaluate all exponential paths. Such a reality would function according to S5 modal logic, where the accessibility relation is an equivalence relation, granting every possible state immediate, symmetric access to every other state. The universe would act as a global oracle, instantly collapsing all future possibilities without the need for sequential time.

Instead, reality calculates its next immediate configuration efficiently through polynomial steps, rigidly constrained by the speed of light and local causal horizons. Intractability generates the necessary metaphysical friction for a localized, S4 unfolding of events. Time and spatial separation serve as the universe's method for breaking down complex interactions into manageable polynomial calculations. Physical evolution remains a localized, step-by-step process rather than an instantaneous global event. Stable matter emerges as the necessary, sustained consequence of this rigid computational asymmetry.
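The formal difference between the two modal systems invoked here comes down to properties of the accessibility relation: S4 frames are reflexive and transitive, while S5 frames are additionally symmetric. A minimal sketch can check those properties on toy frames. The three-world frames and all names below are hypothetical illustrations, not a model of any physical system.

```python
from itertools import product


def is_reflexive(worlds, rel):
    return all((w, w) in rel for w in worlds)


def is_transitive(worlds, rel):
    return all(
        (a, c) in rel
        for a, b, c in product(worlds, repeat=3)
        if (a, b) in rel and (b, c) in rel
    )


def is_symmetric(worlds, rel):
    return all(
        (b, a) in rel
        for a, b in product(worlds, repeat=2)
        if (a, b) in rel
    )


def is_s4_frame(worlds, rel):
    """S4 frames: accessibility is reflexive and transitive."""
    return is_reflexive(worlds, rel) and is_transitive(worlds, rel)


def is_s5_frame(worlds, rel):
    """S5 frames: accessibility is an equivalence relation."""
    return is_s4_frame(worlds, rel) and is_symmetric(worlds, rel)


worlds = [0, 1, 2]

# Forward-only access: a state reaches itself and later states, never earlier ones.
forward_only = {(a, b) for a, b in product(worlds, repeat=2) if a <= b}

# Universal access: every state reaches every other state.
universal = set(product(worlds, repeat=2))

print(is_s4_frame(worlds, forward_only), is_s5_frame(worlds, forward_only))
print(is_s4_frame(worlds, universal), is_s5_frame(worlds, universal))
```

The forward-only frame passes the S4 check and fails the S5 check because access cannot be reversed, which is the asymmetry the argument attributes to sequential computation. The universal frame passes both.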

Furthermore, the absence of contradiction in our universe is not secured by a magical metaphysical decree; it is secured by the strictures of physical exemplification. Moving from abstract encoding in the void to concrete exemplification in the local S4 world requires actual causal processes and physical processing power. As Varzi analyzes, a failure of semantic contravalence only metastasizes into a genuine ontological contradiction if the underlying logical system licenses "conjunction introduction." This is the physical operation of fusing $\text{"a is P"}$ and $\text{"a is not P"}$ into the unified state $\text{"a is both P and not P"}$ (Varzi 2014, 70–71). Ontological contradictions are physically blocked locally precisely because exemplifying a contradictory state requires impossible computational labor:

$$ \neg\exists\text{x} (\text{Exemplifies}(\text{x}, \text{P}) \wedge \text{Exemplifies}(\text{x}, \neg\text{P})). $$

Attempting conjunction introduction in physical reality triggers an infinitely recursive calculation. The local thermodynamic system prohibits this and collapses. The constraint contrasts with the absolute mathematical freedom of the S5 void, where abstract encoding carries zero thermodynamic cost ($\Diamond\exists\text{x} (\text{Encodes}(\text{x}, \text{P}) \wedge \text{Encodes}(\text{x}, \neg\text{P}))$). Therefore, the Law of Non-Contradiction functions not as a smuggled human bias, but as the absolute structural limit of physical systems resolving energetic imbalances.

Concretization

Solving the paralyzing algorithmic void left by Meillassoux's hyper-chaos involves critiquing the polarized binary between absolute randomness and complete structural redundancy. The conceptual problem of "noise" supplies the essential narrative hook. Absolute contingency operates as a fundamental reality, and noise likewise has to be approached as a foundational epistemological condition rather than as simple operational error or meaningless static in a system.

Cécile Malaspina pursues this materialist corrective through a transdisciplinary trajectory. Drawing on a background in both the arts and the epistemology of science, she treats highly formalized scientific concepts, such as thermodynamic entropy and communication theory, as aesthetic and philosophical paradigms shaping how we conceptualize uncertainty. She is also the English co-translator of Gilbert Simondon’s On the Mode of Existence of Technical Objects, which makes her work a natural theoretical hinge between algorithmic incompressibility and the mechanics of concretization.

Malaspina reclaims Claude Shannon’s mathematical theory of communication to validate the chaotic openness of the Great Outdoors. She argues that Shannon correlates information with unpredictability and uncertainty (Malaspina 2018, 15–16). In this model, maximum uncertainty equates to maximum "freedom of choice," rendering uncertainty a generative state (Malaspina 2018, 23–25). Malaspina sharply contrasts Shannon’s generative uncertainty with Norbert Wiener’s cybernetic "negentropy." Wiener defined information primarily as order, prediction, and the reduction of alternatives (Malaspina 2018, 65–69). The concept of negentropy aligns perfectly with the correlationist desire for totalizing prediction. Conversely, Shannon's information entropy gives the operative mechanics required to resolve the algorithmic void.
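Shannon's link between information and uncertainty can be made concrete with his entropy formula, $H = -\sum_i p_i \log_2 p_i$. The sketch below computes it for three toy sources. The distributions and the function name are illustrative assumptions, not examples drawn from Malaspina.

```python
import math


def shannon_entropy_bits(probabilities):
    """H = sum(-p * log2(p)), skipping zero-probability outcomes."""
    return sum(-p * math.log2(p) for p in probabilities if p > 0)


uniform = [0.25, 0.25, 0.25, 0.25]  # maximum uncertainty over four messages
skewed = [0.97, 0.01, 0.01, 0.01]   # one message is nearly certain
certain = [1.0, 0.0, 0.0, 0.0]      # no freedom of choice at all

for name, dist in [("uniform", uniform), ("skewed", skewed), ("certain", certain)]:
    print(f"{name}: {shannon_entropy_bits(dist):.3f} bits")
```

Entropy peaks when every message is equally likely and falls to zero when the outcome is fixed in advance. This is the formal sense in which maximum uncertainty coincides with maximum freedom of choice.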